Hyperscaler companies are pouring billions into AI infrastructure, creating an acute shortage of memory chips that’s reshaping the semiconductor landscape. The spending surge has pushed demand far beyond supply capacity, forcing tech giants to compete for limited inventory.

MarketWatch’s Britney Nguyen and Wichita State University Professor of Management Usha Haley analyzed how this supply crunch is dividing the market into clear winners and losers.

The imbalance stems from AI workloads requiring far more memory than traditional computing tasks.

Memory Suppliers Cash In on Scarcity Premium

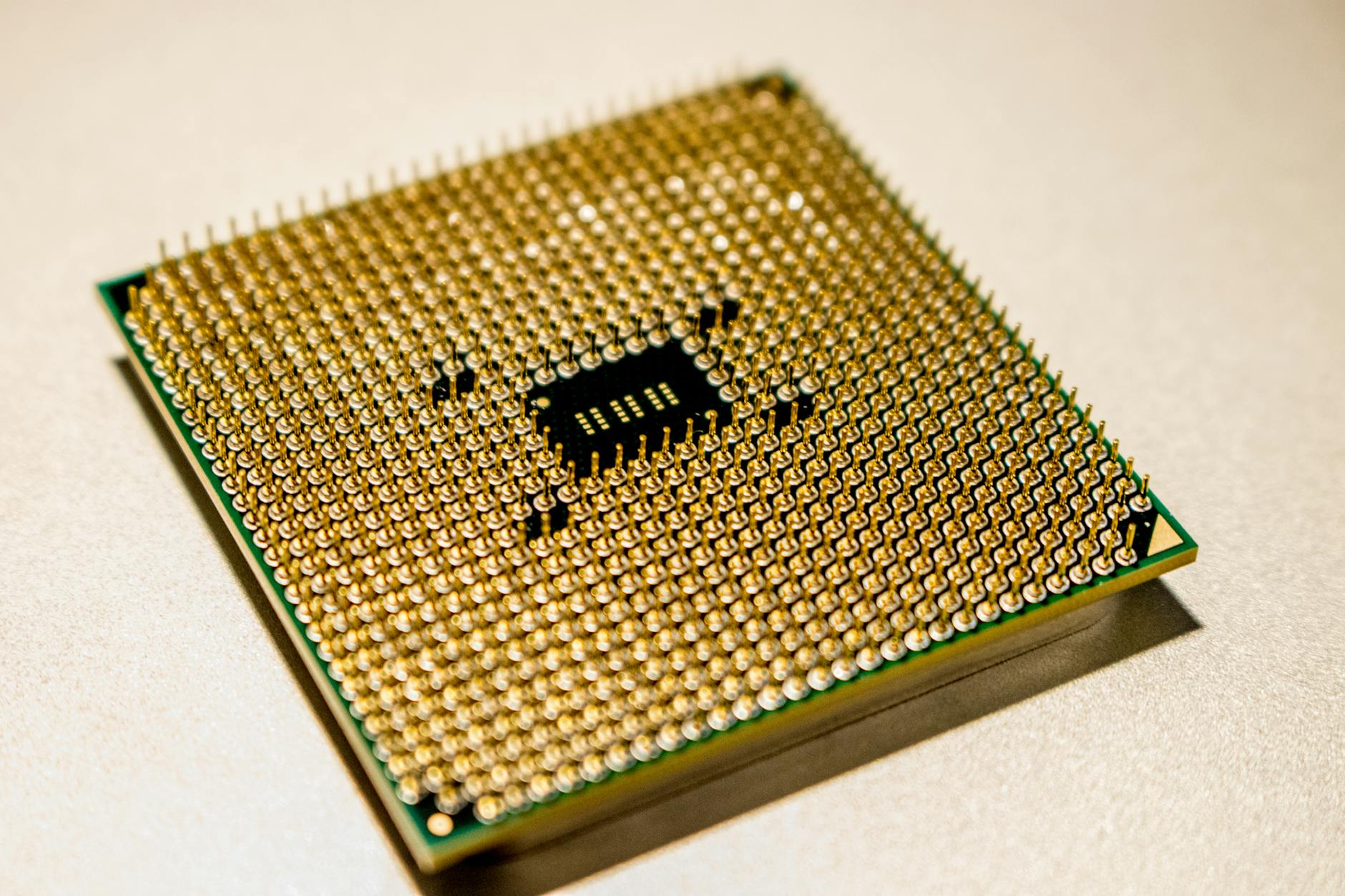

Samsung, SK Hynix, and Micron Technology have emerged as the primary beneficiaries of the shortage. These memory manufacturers are commanding premium prices for high-bandwidth memory (HBM) chips essential for AI processors. Production capacity remains constrained despite ongoing expansion efforts, allowing suppliers to maintain elevated pricing power.

The three companies control roughly 90% of global memory production, giving them substantial leverage over pricing. Samsung leads HBM production with approximately 50% market share, followed by SK Hynix at 30% and Micron at 20%. Each company has announced significant capital expenditure increases to expand manufacturing capacity, but new facilities won’t come online for at least 18 months.

Profit margins on specialized AI memory chips exceed those of standard DRAM by 300-400%. This pricing dynamic has allowed memory suppliers to maintain strong financial performance even as other semiconductor sectors face cyclical downturns. Revenue growth at these companies directly correlates with AI adoption rates across cloud providers and enterprise customers.

Cloud Giants Navigate Supply Chain Bottlenecks

Amazon Web Services, Microsoft Azure, and Google Cloud face mounting pressure to secure memory chip allocations for their AI infrastructure buildouts. These hyperscalers are committing to long-term supply contracts and making advance payments to guarantee access to critical components. The shortage has forced cloud providers to prioritize their highest-value AI customers and delay some infrastructure projects.

Microsoft reportedly secured preferential access to memory chips through a multibillion-dollar advance payment agreement with Samsung. Google has taken a different approach, investing directly in memory manufacturers to ensure supply chain stability. Amazon’s strategy involves diversifying suppliers and developing alternative chip architectures to reduce dependence on scarce HBM modules.

The supply constraints have created a two-tier market in which established cloud giants maintain access while smaller competitors struggle to obtain the necessary components. This dynamic reinforces the market position of major hyperscalers and makes it increasingly difficult for new entrants to compete in AI infrastructure services. Regional cloud providers in particular report significant delays in expanding their AI offerings due to component shortages.

Smaller Tech Companies Face Mounting Pressures

Startups and mid-tier technology companies find themselves squeezed out of memory chip allocation queues. Without the purchasing power of hyperscalers, these companies face extended lead times and inflated prices for AI-capable hardware. Many have been forced to redesign their products around available components or delay product launches entirely.

Nvidia’s data center customers outside the top tier report difficulty securing complete AI server configurations due to memory shortages. Some companies are purchasing servers without full memory complements, planning to upgrade later when chips become available. This approach increases total system costs and delays time-to-market for AI applications.

The shortage has created opportunities for memory brokers and secondary-market dealers, who command significant premiums for spot purchases. Gray-market prices for HBM chips can exceed official list prices by 200-300%, making it hard for smaller companies to afford their own AI infrastructure. Some firms are exploring cloud-only strategies rather than investing in on-premises AI hardware.

Investment analysts project the memory shortage will persist through 2025, with new production capacity unlikely to match demand growth from expanding AI adoption.